Microsoft’s BitNet b1.58 Launches with a Bang: A Faster, More Energy-Efficient 1-Bit AI Model?

The pace of AI development is dizzying, but so is the growing “appetite” of these models. Let’s take a look at Microsoft’s latest BitNet b1.58 2B4T model, a “1.58-bit” large language model (LLM) that aims to strike a balance between performance and extraordinary efficiency—and why it could be a game-changer in the AI world.

Have you ever wished AI models could be both smart and energy-efficient? As large language models (LLMs) grow increasingly powerful, so do their demands on computational resources like memory and electricity. It’s like owning a super-smart pet that eats a mountain of food every day.

But there’s good news from Microsoft Research: they’ve introduced a model called BitNet b1.58 2B4T. This isn’t your typical LLM. It is billed as the first open-source, natively trained 1-bit LLM at the 2-billion-parameter scale (the “2B4T” stands for 2 billion parameters and 4 trillion training tokens). Sounds technical? Don’t worry, here’s the simple version: this model could signal the beginning of a faster, leaner, and more affordable era of AI.

➡️ Official inference code: microsoft/BitNet (bitnet.cpp)

What Kind of Sorcery Is “1.58-bit”?

Most model parameters are stored using precise numerical formats (like 16-bit or 32-bit floats). That’s like painting with many colors—lots of detail, but big file sizes.

BitNet takes a radically different approach with something called 1.58-bit quantization. In essence, it restricts the model’s internal values (weights) to just three numbers: -1, 0, and +1. The odd-looking “1.58” comes from information theory: a value with three possible states carries log2(3) ≈ 1.58 bits. Think of it like turning a full-color photo into a minimalist three-tone sketch.
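To make the idea concrete, here is a minimal sketch of one way to map full-precision weights to {-1, 0, +1}. It follows the absmean recipe described for BitNet b1.58 (divide by the mean absolute value, round, clip). The function name `ternarize` and the sample values are illustrative assumptions, and this is not the model’s actual training code.

```python
def ternarize(weights, eps=1e-8):
    """Map a list of floats to values in {-1, 0, +1} plus one shared scale."""
    # Scale = mean absolute value of the weights (eps avoids division by zero).
    scale = sum(abs(w) for w in weights) / len(weights) + eps
    # Divide by the scale, round to the nearest integer, clip to [-1, 1].
    ternary = [max(-1, min(1, round(w / scale))) for w in weights]
    return ternary, scale


weights = [0.42, -0.07, -0.91, 0.03, 0.55, -0.38]  # made-up example values
ternary, scale = ternarize(weights)
print(ternary)                       # [1, 0, -1, 0, 1, -1]
print([t * scale for t in ternary])  # coarse reconstruction of the originals
```

Small weights collapse to 0 and large ones saturate at -1 or +1, so only the sign and a single shared scale survive. That is the sense in which the “photo” becomes a “sketch”.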

Why is this awesome?

- Drastically reduced memory usage: The model file becomes tiny! Just check the comparison table below—BitNet is featherweight.

- Faster computation: Simpler math means faster processing and lower latency.

- Lower energy consumption: Less computation = less power = more eco-friendly and cheaper to run.
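Why does “simpler math” follow from three-valued weights? A rough sketch of the idea: when every weight is -1, 0, or +1, a dot product needs no multiplications at all, only additions, subtractions, and skips. The function below is a hypothetical illustration of that arithmetic; optimized kernels such as those in bitnet.cpp are far more elaborate.

```python
def ternary_dot(ternary_weights, activations):
    """Dot product where every weight is -1, 0, or +1: no multiplications."""
    total = 0
    for w, x in zip(ternary_weights, activations):
        if w == 1:
            total += x   # weight +1: add the activation
        elif w == -1:
            total -= x   # weight -1: subtract the activation
        # weight 0: skip the activation entirely
    return total


weights = [1, 0, -1, 0, 1, -1]
activations = [12, -5, 7, 30, -2, 4]  # made-up integer activations
print(ternary_dot(weights, activations))  # 12 - 7 + (-2) - 4 = -1
```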

Most importantly, BitNet isn’t a case of shrinking down a fully trained model (that’s called post-training quantization). Instead, it’s trained from the ground up using the 1.58-bit format. That’s like being a martial arts prodigy born with unique talent—not someone who trained later to squeeze into tight spaces. This allows it to retain “smarts” while being ultra-efficient.

Meet BitNet b1.58 2B4T

Now let’s get to know the model a bit more:

- Size: Around 2 billion parameters. Not the largest, but it packs a punch for its class.

- Training data: Trained on 4 trillion tokens—including public text, code, and synthetic math data. No wonder it’s so capable.

- Architecture: Based on the Transformer framework, but “modded” with Microsoft’s custom BitLinear layers. It also uses popular LLM features like RoPE (rotary positional embeddings), ReLU² activation, and SubLN normalization. For extreme simplicity, it has no bias terms in linear and normalization layers.

- Quantization specs: Uses a W1.58A8 configuration, meaning 1.58-bit weights (represented as {-1, 0, +1}) and 8-bit activations.

- Context length: Supports sequences up to 4096 tokens. For tasks that need much longer contexts, Microsoft recommends an intermediate long-sequence adaptation stage before the final fine-tuning.

- Training pipeline:

  - Pretraining: Foundation training on massive data.

  - Supervised Fine-Tuning (SFT): Teaches the model to follow instructions and converse.

  - Direct Preference Optimization (DPO): Aligns the model with human preferences for more helpful responses.

- Tokenizer: Uses the same tokenizer as LLaMA 3, with a vocabulary size of 128,256 tokens.
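The “A8” half of W1.58A8 in the list above can be sketched in a few lines. This is a minimal illustration of per-token absmax quantization to 8-bit integers, under stated assumptions: the helper names are hypothetical, and the symmetric range [-127, 127] is a simplification chosen for the example rather than a confirmed detail of the model.

```python
def quantize_activations_int8(values, eps=1e-8):
    """Quantize one token's activations to integers in [-127, 127]."""
    # Absmax scaling: the largest magnitude in the token maps to 127.
    absmax = max(abs(v) for v in values)
    scale = 127.0 / max(absmax, eps)
    quantized = [max(-127, min(127, round(v * scale))) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the integer representation."""
    return [q / scale for q in quantized]


token_activations = [0.8, -2.0, 0.05, 1.3]  # made-up example values
q, scale = quantize_activations_int8(token_activations)
print(q)                     # [51, -127, 3, 83]
print(dequantize(q, scale))  # close to the original activations
```

With integer activations and ternary weights, the bulk of a layer’s work can in principle be done with integer additions and subtractions, with the scales applied once at the end.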

How Good Is It? Let’s Compare

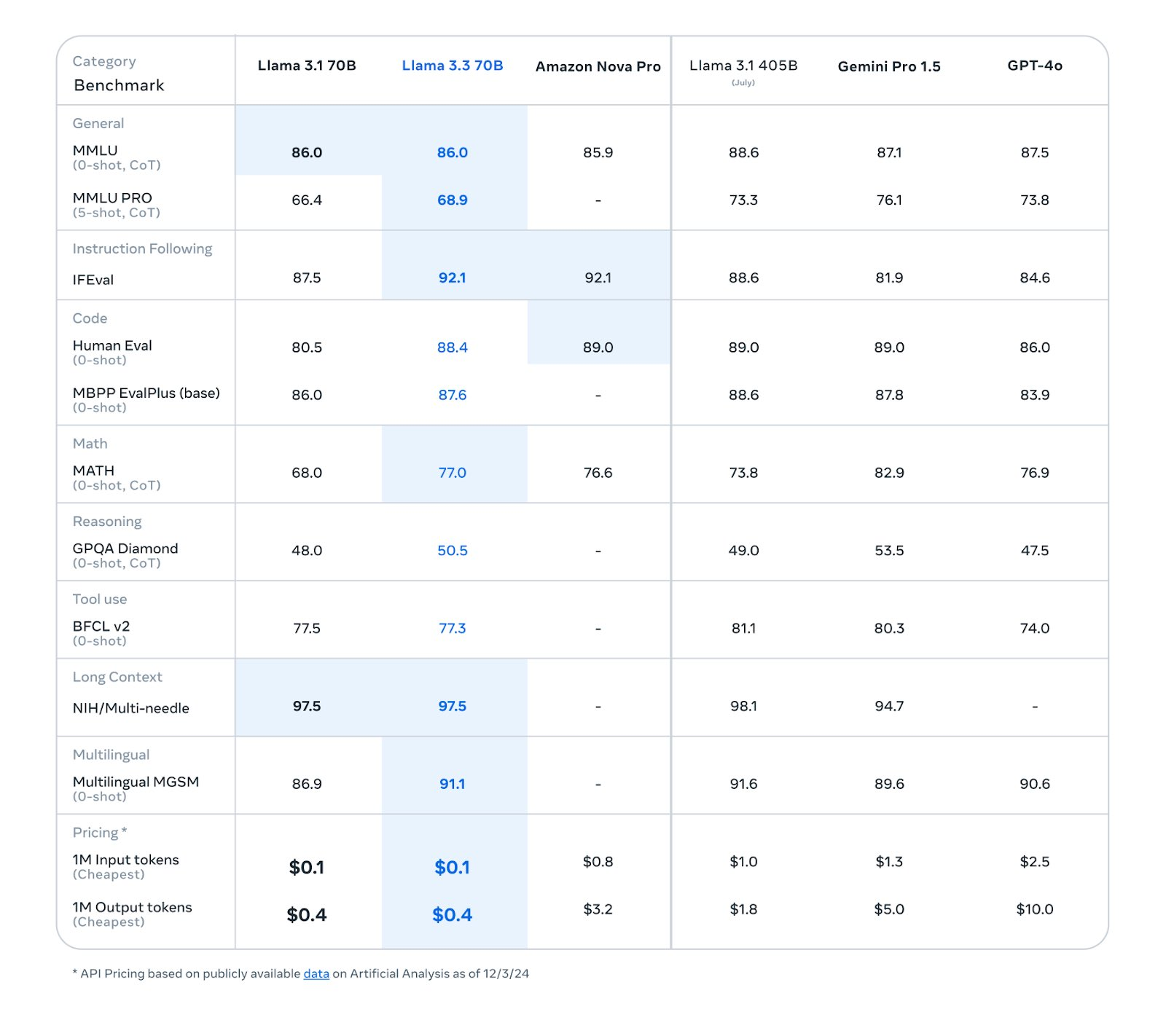

So how does BitNet b1.58 stack up against open-source, full-precision models in the same size class (LLaMA 3.2 1B, Gemma-3 1B, Qwen2.5 1.5B, SmolLM2 1.7B, and MiniCPM 2B)? Quite impressively:

| Metric | LLaMA 3.2 1B | Gemma-3 1B | Qwen2.5 1.5B | SmolLM2 1.7B | MiniCPM 2B | BitNet b1.58 2B |
| --- | --- | --- | --- | --- | --- | --- |
| Memory (non-embedding) | 2GB | 1.4GB | 2.6GB | 3.2GB | 4.8GB | 0.4GB (!!) |
| Latency (CPU decoding) | 48ms | 41ms | 65ms | 67ms | 124ms | 29ms (blazing!) |
| Energy (estimated) | 0.258J | 0.186J | 0.347J | 0.425J | 0.649J | 0.028J (wow!) |
| Benchmark Avg. | 44.90 | 43.74 | 55.23 | 48.70 | 42.05 | 54.19 (very close) |
| MMLU | 45.58 | 39.91 | 60.25 | 49.24 | 51.82 | 53.17 |
| GSM8K (math reasoning) | 38.21 | 31.16 | 56.79 | 45.11 | 4.40 | 58.38 (winner!) |
| MATH-500 (math reasoning) | 23.00 | 42.00 | 53.00 | 17.60 | 14.80 | 43.40 |

Note: LLaMA 3.2 1B used pruning and distillation. Gemma-3 1B also used distillation.
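To put the efficiency rows in perspective, the short script below divides each baseline’s figure by BitNet’s. The numbers are copied from the comparison table; the names `BASELINES`, `BITNET`, and `ratios_vs_bitnet` are just illustrative.

```python
# Efficiency figures copied from the comparison table:
# (memory in GB, CPU decoding latency in ms, estimated energy in J)
BASELINES = {
    "LLaMA 3.2 1B": (2.0, 48, 0.258),
    "Gemma-3 1B": (1.4, 41, 0.186),
    "Qwen2.5 1.5B": (2.6, 65, 0.347),
    "SmolLM2 1.7B": (3.2, 67, 0.425),
    "MiniCPM 2B": (4.8, 124, 0.649),
}
BITNET = (0.4, 29, 0.028)


def ratios_vs_bitnet(baseline, bitnet=BITNET):
    """Return how many times larger each baseline figure is than BitNet's."""
    return tuple(b / n for b, n in zip(baseline, bitnet))


for name, figures in BASELINES.items():
    mem, lat, energy = ratios_vs_bitnet(figures)
    print(f"{name:13s} memory {mem:4.1f}x  latency {lat:3.1f}x  energy {energy:4.1f}x")
```

On these figures, the baselines need between 3.5x and 12x more memory, show decoding latency about 1.4x to 4.3x higher, and use roughly 6.6x to 23x more energy than BitNet.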

What can we learn from the table?

- Efficiency beast: BitNet blows away the competition in memory, latency, and energy use. 0.4GB RAM usage is less than many mobile apps. And 29ms decoding on CPU? That’s fast.

- Strong performance: BitNet performs on par with similarly sized full-precision models across benchmarks like MMLU, GSM8K, and MATH-500. It even takes the top spot on GSM8K (58.38). It slightly trails Qwen2.5 1.5B in average score (54.19 vs. 55.23), but given its efficiency, the tradeoff is more than worth it.

It’s like driving a super fuel-efficient car—it may not be the fastest on the track, but it’s shockingly effective overall.
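Where does a figure like 0.4GB come from? A back-of-the-envelope check, assuming a round 2 billion weights (the exact non-embedding parameter count is not given here, and real packed formats add some overhead):

```python
import math


def weight_memory_gb(n_weights, bits_per_weight):
    """Storage for n_weights at the given bit width, in gigabytes (1e9 bytes)."""
    return n_weights * bits_per_weight / 8 / 1e9


N = 2_000_000_000  # assumption: a round 2 billion weights

print(round(math.log2(3), 2))             # 1.58 bits per three-valued weight
print(weight_memory_gb(N, math.log2(3)))  # ideal ternary storage, about 0.4 GB
print(weight_memory_gb(N, 2))             # simple packing at 2 bits per weight
print(weight_memory_gb(N, 16))            # the same weights as 16-bit floats
```

Under these assumptions the ternary weights fit in roughly 0.4GB, versus 4GB for the same number of 16-bit weights, which is in line with the memory row in the comparison.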

Want to Try It? Read This First

Ready to test it out? Hold on—there are a few key things you should know.

⚠️ Very Important Warning About Efficiency ⚠️

WARNING! WARNING! WARNING! If you plan to run BitNet using the popular Hugging Face transformers library, do NOT expect the ultra-fast, low-latency, power-saving results mentioned above.

Why? Because transformers does not yet support optimized low-level operations for BitNet. Running it there might feel as slow or even slower than running a regular full-precision model!

While memory usage will likely decrease, you’ll only see the real computational efficiency using the official C++ implementation: bitnet.cpp

So if you’re chasing maximum efficiency, head over to bitnet.cpp and follow the instructions to build and run it properly.

Choosing the Right Model Version:

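Several versions are available on Hugging Face. Here’s how to choose:

- microsoft/bitnet-b1.58-2B-4T: Packed 1.58-bit weights, intended for inference and deployment.

- microsoft/bitnet-b1.58-2B-4T-bf16: Master weights in BF16, intended for training or fine-tuning.

- microsoft/bitnet-b1.58-2B-4T-gguf: GGUF format, intended for CPU inference with bitnet.cpp.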

Testing with transformers (experimental only, not efficient):

If you’re just curious and don’t mind the lack of speed/efficiency, you can try this unofficial transformers branch:

```bash
pip install git+https://github.com/shumingma/transformers.git
```

Sample Python code:

```python
import torch
from transformers import AutoModelForCausalLM, AutoTokenizer

model_id = "microsoft/bitnet-b1.58-2B-4T"

# Load the tokenizer and the model
tokenizer = AutoTokenizer.from_pretrained(model_id)
model = AutoModelForCausalLM.from_pretrained(
    model_id,
    torch_dtype=torch.bfloat16,  # Required for loading
)

# Build a chat prompt using the model's chat template
messages = [
    {"role": "system", "content": "You are a helpful AI assistant."},
    {"role": "user", "content": "How are you?"},
]
prompt = tokenizer.apply_chat_template(messages, tokenize=False, add_generation_prompt=True)
chat_input = tokenizer(prompt, return_tensors="pt").to(model.device)

# Generate a reply and decode only the newly generated tokens
chat_outputs = model.generate(**chat_input, max_new_tokens=50)
response = tokenizer.decode(chat_outputs[0][chat_input["input_ids"].shape[-1]:], skip_special_tokens=True)
print("\nAssistant Response:", response)
```

Once Again: For Efficiency, Use bitnet.cpp!

Language Limitation: English Only

At this point, BitNet b1.58 2B4T only understands English. It may not comprehend or respond properly to Chinese or other languages. Keep this in mind before using it.

Who Should Use It? Final Notes

BitNet might be perfect for:

- AI researchers: Exploring the future of low-bit model training and deployment.

- Efficiency-obsessed developers: Those needing LLMs to run on limited-resource devices.

- Cost-conscious teams or individuals: Lower memory and power requirements = reduced server costs.

Good news: The model and code are released under the MIT License, so you can use and modify them freely. Nice!

That said, this is still a research/development model. Despite SFT and DPO alignment, it may still produce unexpected, biased, or inaccurate outputs. Use it responsibly and with critical judgment.

In Conclusion: A Major Step Toward Lightweight AI?

So, what does BitNet b1.58 2B4T represent? Not just another LLM—it’s a proof of concept that we don’t always need the biggest, most resource-hungry models. With native low-bit training like 1.58-bit quantization, we can potentially keep decent performance while slashing the cost of running AI.

That could mean wider AI adoption, especially in resource-constrained environments—and maybe even a smaller carbon footprint for the whole industry. Of course, this is just the beginning. We’re likely to see even more powerful and efficient models built on similar principles.

BitNet is like opening a new lane on the AI superhighway—one that’s leaner, greener, and surprisingly powerful. Where it leads next? That’s the exciting part.