Introduction

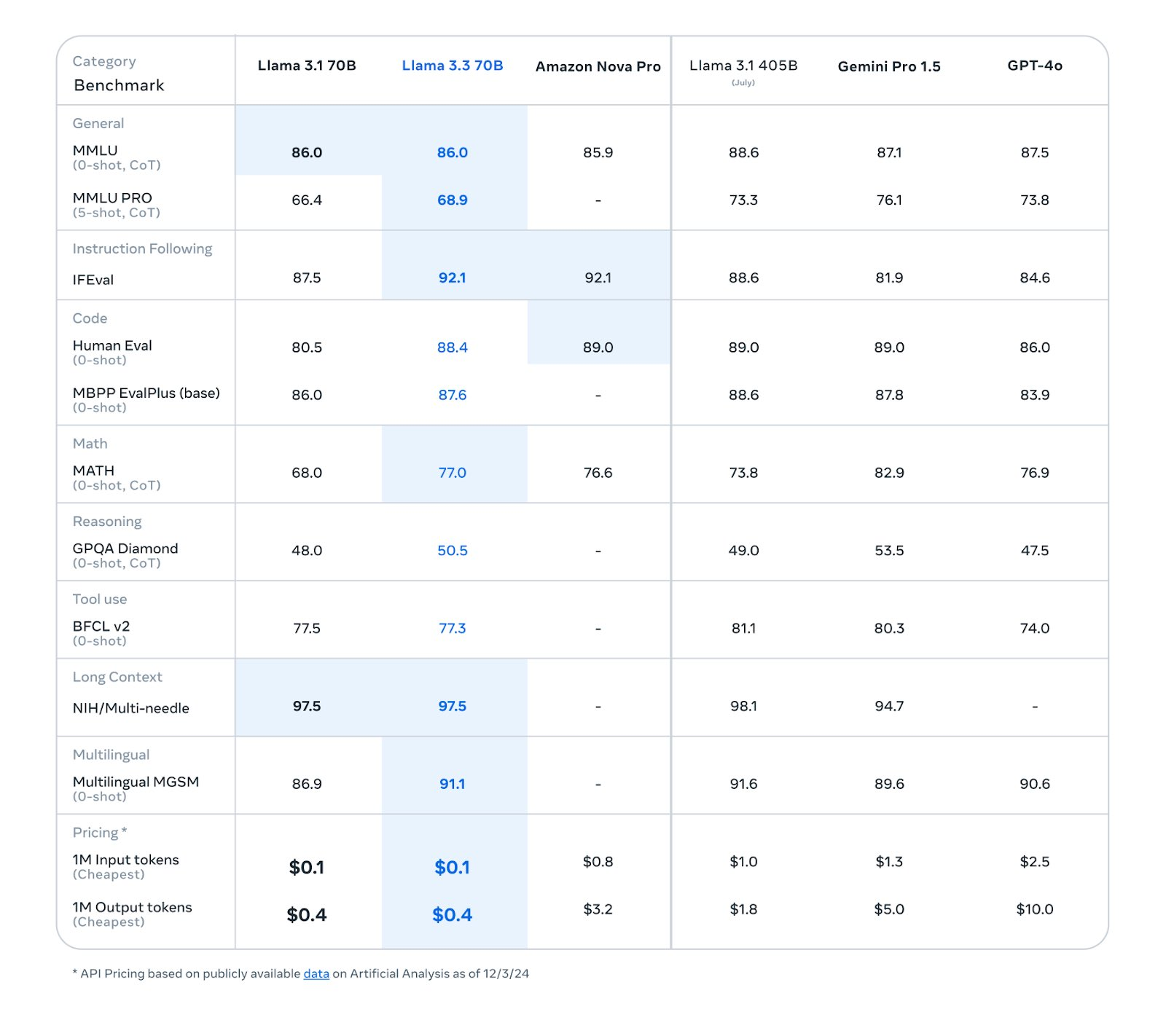

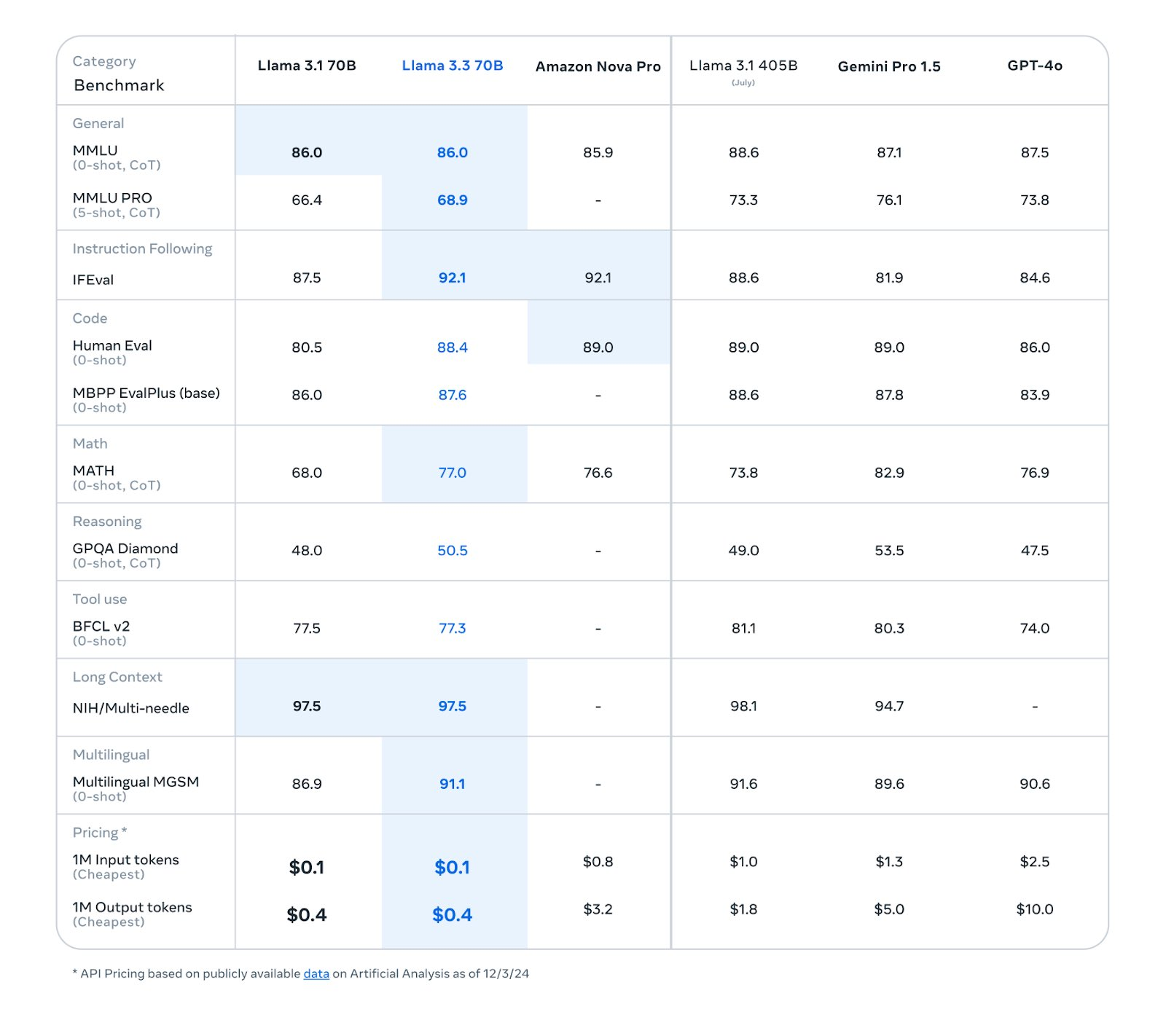

Meta has released Llama 3.3 70B, a model that challenges the assumption that better performance requires more parameters. With less than one-fifth of the parameters of Llama 3.1 405B, it delivers nearly the same level of performance, which translates into a substantial gain in cost efficiency for developers. This article explores the model's features, its use cases, and its impact on developers.

Image credit: Ollama Library

Technical Breakthroughs of Llama 3.3 70B

Not Just Adding Parameters

Llama 3.3 performs strongly on tasks such as reading comprehension, math, and general knowledge, thanks to advanced fine-tuning techniques. Rather than simply adding parameters, Meta challenges the “scaling plateau” by leveraging an optimized architecture to achieve significant performance gains at the same model size.

- Versatile Functions: Capabilities ranging from explanation to tool use are all fine-tuned.

- Smaller Model Size: With fewer parameters, each computation requires less memory and compute.

These improvements allow Llama 3.3 70B to rival larger models, changing how AI developers think about the trade-off between size and efficiency.
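To put the size difference in concrete terms, here is a rough back-of-the-envelope sketch. The parameter counts come from the model names; the bytes-per-parameter figures are the usual values for each numeric format and are an assumption here, not numbers published by Meta. The estimate covers the weights only and ignores activations and the KV cache.

```python
# Back-of-the-envelope comparison of parameter counts and weight memory.
# Assumption: bytes-per-parameter values are the standard sizes for each
# numeric format; real deployments also need memory for activations and cache.

PARAMS = {"Llama 3.3 70B": 70e9, "Llama 3.1 405B": 405e9}
BYTES_PER_PARAM = {"fp16/bf16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, bytes_per_param: float) -> float:
    """Approximate memory for the weights alone, in gigabytes (10^9 bytes)."""
    return num_params * bytes_per_param / 1e9


ratio = PARAMS["Llama 3.3 70B"] / PARAMS["Llama 3.1 405B"]
print(f"Parameter ratio: {ratio:.3f}")  # about 0.173, i.e. under one-fifth

for name, n in PARAMS.items():
    for fmt, b in BYTES_PER_PARAM.items():
        print(f"{name:>15} @ {fmt:<9}: {weight_memory_gb(n, b):7.1f} GB")
```

At 16-bit precision this works out to roughly 140 GB of weights for the 70B model versus roughly 810 GB for the 405B model, which is why the smaller model is so much easier to host.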

Impact on Developers

Llama 3.3 70B delivers notable advancements across multiple areas:

- Code Support: Offers stronger error handling and more useful debugging feedback.

- Chain of Thought Reasoning: Produces more accurate step-by-step responses.

- Language Coverage: Supports 8 languages with improved output precision.

These improvements are particularly crucial for developers needing efficient, high-accuracy tools.

Highlighted Features

- JSON Output: Simplifies function calls and data transmission.

- Step-by-Step Reasoning: Ensures higher accuracy in math calculations and complex tasks.

- Programming Language Support: Comprehensive support for major programming languages.

- Multi-Language Support: Includes English, French, German, Spanish, Italian, Portuguese, Thai, and Hindi.

These features enable Llama 3.3 to cater to diverse commercial and research needs, especially in multilingual dialogue and natural language generation.
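As an illustration of why JSON output simplifies function calls, the sketch below parses a JSON string and dispatches it to a local function. Everything in it is hypothetical: the `get_weather` tool, the `TOOLS` registry, and the assumed `{"name": ..., "parameters": {...}}` shape are illustrative choices, and the sample string is hand-written rather than real model output.

```python
import json


# Hypothetical tool: a stub standing in for a real weather lookup.
def get_weather(city: str) -> str:
    return f"(stub) weather for {city}"


# Hypothetical registry mapping tool names to Python callables.
TOOLS = {"get_weather": get_weather}


def dispatch_tool_call(model_output: str) -> str:
    """Parse a JSON tool call and run the matching tool.

    Assumed shape: {"name": "<tool>", "parameters": {...}}.
    """
    try:
        call = json.loads(model_output)
    except json.JSONDecodeError as exc:
        raise ValueError(f"model output is not valid JSON: {exc}") from exc

    if not isinstance(call, dict):
        raise ValueError("expected a JSON object")

    name = call.get("name")
    params = call.get("parameters", {})
    if name not in TOOLS:
        raise ValueError(f"unknown tool: {name!r}")
    if not isinstance(params, dict):
        raise ValueError("parameters must be a JSON object")

    return TOOLS[name](**params)


# Hand-written string standing in for real model output.
sample = '{"name": "get_weather", "parameters": {"city": "Paris"}}'
print(dispatch_tool_call(sample))
```

Because the output is structured, the calling code can validate it before acting on it, instead of extracting arguments from free-form text.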

Overview of the Llama Series

If you’re new to the rapid evolution of the Llama family, here’s a summary of its key milestones:

- Llama 3.1 Series: Included models with 8B, 70B, and 405B parameters, released in July 2024, designed for text applications.

- Llama 3.2 Series: Focused on lightweight models for edge deployment (1B and 3B parameters) and multi-modal models (11B Vision and 90B Vision), launched in September 2024.

- Llama 3.3 70B: Focused on text tasks, enhancing reasoning, math, general knowledge, and instruction following.

Use Cases of the New Model

Commercial and Research Applications

Llama 3.3 is designed for multilingual assistant-based conversation applications and natural language generation tasks. For example:

- Enhancing automation tools like hypertext generation and event parsing.

- Generating synthetic data for distillation and analysis.
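For a multilingual assistant, a request might be assembled along the following lines. This is a minimal sketch that assumes the model is served locally through Ollama on its default port under the tag `llama3.3`; the helper `build_chat_payload` and the system prompt wording are illustrative, not part of any official API. The block only builds and prints the request body, so it runs without a server.

```python
import json

# Assumptions: a local Ollama server on its default port and the model
# pulled under the tag "llama3.3". Adjust both for your environment.
OLLAMA_URL = "http://localhost:11434/api/chat"
MODEL_TAG = "llama3.3"


def build_chat_payload(user_text: str, reply_language: str) -> dict:
    """Build a non-streaming chat request that asks for replies in one language."""
    return {
        "model": MODEL_TAG,
        "stream": False,
        "messages": [
            {
                "role": "system",
                "content": (
                    "You are a helpful assistant. "
                    f"Always reply in {reply_language}."
                ),
            },
            {"role": "user", "content": user_text},
        ],
    }


# Print the request body that would be POSTed to OLLAMA_URL.
payload = build_chat_payload("Wie funktioniert ein Sprachmodell?", "German")
print(json.dumps(payload, indent=2, ensure_ascii=False))
```

To send it, the payload would be POSTed as JSON to `OLLAMA_URL`, with the reply text read from the `message.content` field of the response.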

Linguistic Studies and Customization

While Llama 3.3 currently supports 8 languages, developers can fine-tune the model for other languages, provided they comply with the community license and responsible use policies.

Conclusion

Llama 3.3 70B is more than a technical milestone: it signals a new era of “quality balance” in AI, where performance close to that of much larger models comes at a size that developers and enterprises can realistically deploy.

Sources