Introduction

AI models advanced rapidly through 2024, and the Gemini 2.0 series marks the next milestone. In February 2025, Google announced the general availability of Gemini 2.0 Flash, together with an experimental release of Gemini 2.0 Pro and a preview of Gemini 2.0 Flash-Lite. The series brings major improvements in handling complex tasks, increasing speed, and reducing costs.

This article covers what is new in Gemini 2.0, the key features of each model, benchmark comparisons across versions, and how these models can improve development efficiency.

Image Source: https://blog.google/technology/google-deepmind/gemini-model-updates-february-2025/

1. Gemini 2.0 Flash

1.1 What is Gemini 2.0 Flash?

The Flash series of models was first introduced at Google I/O 2024. With high computational efficiency and multimodal processing capabilities, it quickly became a popular choice among developers, and Gemini 2.0 Flash continues that line.

This model features a 1 million token context window, allowing it to process large amounts of information in a single request. It is well suited to high-frequency, high-throughput tasks such as real-time customer support, automatic summarization, and large-scale content generation.
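To get a feel for what a 1 million token window means in practice, here is a minimal sketch that estimates whether a piece of text is likely to fit. The figure of roughly 4 characters per token is an assumption (a common rule of thumb for English text, not an official number), and the function names and the `reserved_for_output` budget are hypothetical. For exact counts, use the token-counting endpoint of the Gemini API instead of this estimate.

```python
# Rough check: is a text likely to fit in a 1 million token context window?
CONTEXT_WINDOW_TOKENS = 1_000_000  # window size stated in the article
CHARS_PER_TOKEN = 4                # ASSUMPTION: rule-of-thumb average, not an official figure


def estimate_tokens(text: str) -> int:
    """Estimate the token count from the character count (rounded up)."""
    return -(-len(text) // CHARS_PER_TOKEN)  # ceiling division


def fits_in_context(text: str, reserved_for_output: int = 8_192) -> bool:
    """Return True if the estimated prompt size plus an output budget fits the window.

    reserved_for_output is a hypothetical budget left free for the model's reply.
    """
    return estimate_tokens(text) + reserved_for_output <= CONTEXT_WINDOW_TOKENS


if __name__ == "__main__":
    sample = "word " * 200_000  # about 1 million characters
    print(estimate_tokens(sample), fits_in_context(sample))
```

Because the ratio of characters to tokens varies by language and content type, treat the result as a first filter only.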

1.2 Key Features of Gemini 2.0 Flash

- Low latency, high efficiency: Suitable for applications requiring fast responses.

- Powerful multi-modal understanding: Supports text, images, and more.

- Optimized for large-scale data processing: Perfect for AI-powered customer service, real-time translation, and more.

1.3 How to Use Gemini 2.0 Flash?

Developers can now access Gemini 2.0 Flash through Google AI Studio and Vertex AI for production use.
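As a minimal sketch of what a call through the Gemini API can look like, the example below builds a `generateContent` request with only the Python standard library. It assumes the public REST endpoint at `generativelanguage.googleapis.com`, the model ID `gemini-2.0-flash`, and an API key stored in a `GEMINI_API_KEY` environment variable. The helper names `build_request` and `generate` are hypothetical; check the current API reference before relying on any of these details.

```python
import json
import os
import urllib.request

# ASSUMPTIONS: endpoint root and model ID as commonly documented for the Gemini API.
API_ROOT = "https://generativelanguage.googleapis.com/v1beta"
MODEL = "gemini-2.0-flash"


def build_request(prompt: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a generateContent request for a text prompt."""
    body = {"contents": [{"parts": [{"text": prompt}]}]}
    return urllib.request.Request(
        url=f"{API_ROOT}/models/{MODEL}:generateContent",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json", "x-goog-api-key": api_key},
        method="POST",
    )


def generate(prompt: str, api_key: str) -> str:
    """Send the request and return the text of the first candidate."""
    with urllib.request.urlopen(build_request(prompt, api_key), timeout=30) as resp:
        reply = json.load(resp)
    return reply["candidates"][0]["content"]["parts"][0]["text"]


if __name__ == "__main__":
    key = os.environ.get("GEMINI_API_KEY")
    if key:  # only call the network when a key is configured
        print(generate("Summarize the benefits of a long context window.", key))
```

Separating request construction from sending keeps the payload easy to inspect and test without network access.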

2. Gemini 2.0 Pro: Optimized for Coding and Complex Problem Solving

2.1 What is Gemini 2.0 Pro?

Gemini 2.0 Pro is one of Google’s most powerful AI models, designed for programming, mathematical reasoning, and knowledge analysis. It delivers more precise answers even in complex scenarios.

The model has a 2 million token context window and can call tools such as Google Search and code execution, which significantly improves development and data analysis efficiency.
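The sketch below shows how tool use might be requested in a `generateContent` body. The `google_search` and `code_execution` tool fields reflect the Gemini REST API as commonly documented, but they are assumptions here and should be verified against the current reference. The helper name `build_tool_body` is hypothetical, and the sketch enables one tool per request to stay conservative.

```python
import json

# ASSUMPTION: tool field names as commonly documented for the Gemini REST API.
SUPPORTED_TOOLS = ("google_search", "code_execution")


def build_tool_body(prompt: str, tool: str) -> dict:
    """Build a generateContent request body that enables a single built-in tool."""
    if tool not in SUPPORTED_TOOLS:
        raise ValueError(f"unknown tool: {tool!r}")
    return {
        "contents": [{"parts": [{"text": prompt}]}],
        "tools": [{tool: {}}],  # an empty object enables the tool with default settings
    }


if __name__ == "__main__":
    body = build_tool_body("What is the sum of the first 50 primes?", "code_execution")
    print(json.dumps(body, indent=2))
```

The body would be posted to the model's `generateContent` endpoint in the same way as a plain text prompt.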

2.2 Key Features of Gemini 2.0 Pro

- Best-in-class code generation: Supports Python, SQL, JavaScript, and more.

- Advanced knowledge reasoning and analysis: Ideal for financial risk assessment, data analysis, and complex problem-solving.

- 2 million token context window: Handles large-scale text processing with ease.

2.3 How Can Gemini 2.0 Pro Improve Developer Efficiency?

- AI-powered assistants: Ideal for smart customer service, chatbots, and more.

- Optimized data analysis: Helps process reports, legal documents, and more.

- Advanced coding and debugging: Provides automated coding suggestions and optimizes code quality.

3. Gemini 2.0 Flash-Lite: The Most Cost-Effective AI Model

3.1 What is Gemini 2.0 Flash-Lite?

Gemini 2.0 Flash-Lite is the most cost-efficient model in the Gemini 2.0 family, offering strong performance at low cost.

Compared to 1.5 Flash, 2.0 Flash-Lite scores higher on most benchmarks while maintaining the same speed and cost, making it a good fit for businesses developing large-scale AI services.

3.2 Key Features of Gemini 2.0 Flash-Lite

- Lowest cost: Ideal for AI-driven applications such as news summarization and automated content generation.

- 1 million token context window: Can handle longer content processing.

- Multi-modal input support: Works with text, images, and other formats.

4. Security and Responsibility: AI Risk Management in Gemini 2.0

As AI technology advances, Google has also strengthened the security measures in Gemini 2.0, including:

- New reinforcement learning techniques: Improve response accuracy and reduce misinformation.

- Automated risk assessment system: Protects against security threats like prompt injection.

- Enhanced data privacy and user protection: Ensures compliance with global privacy regulations.

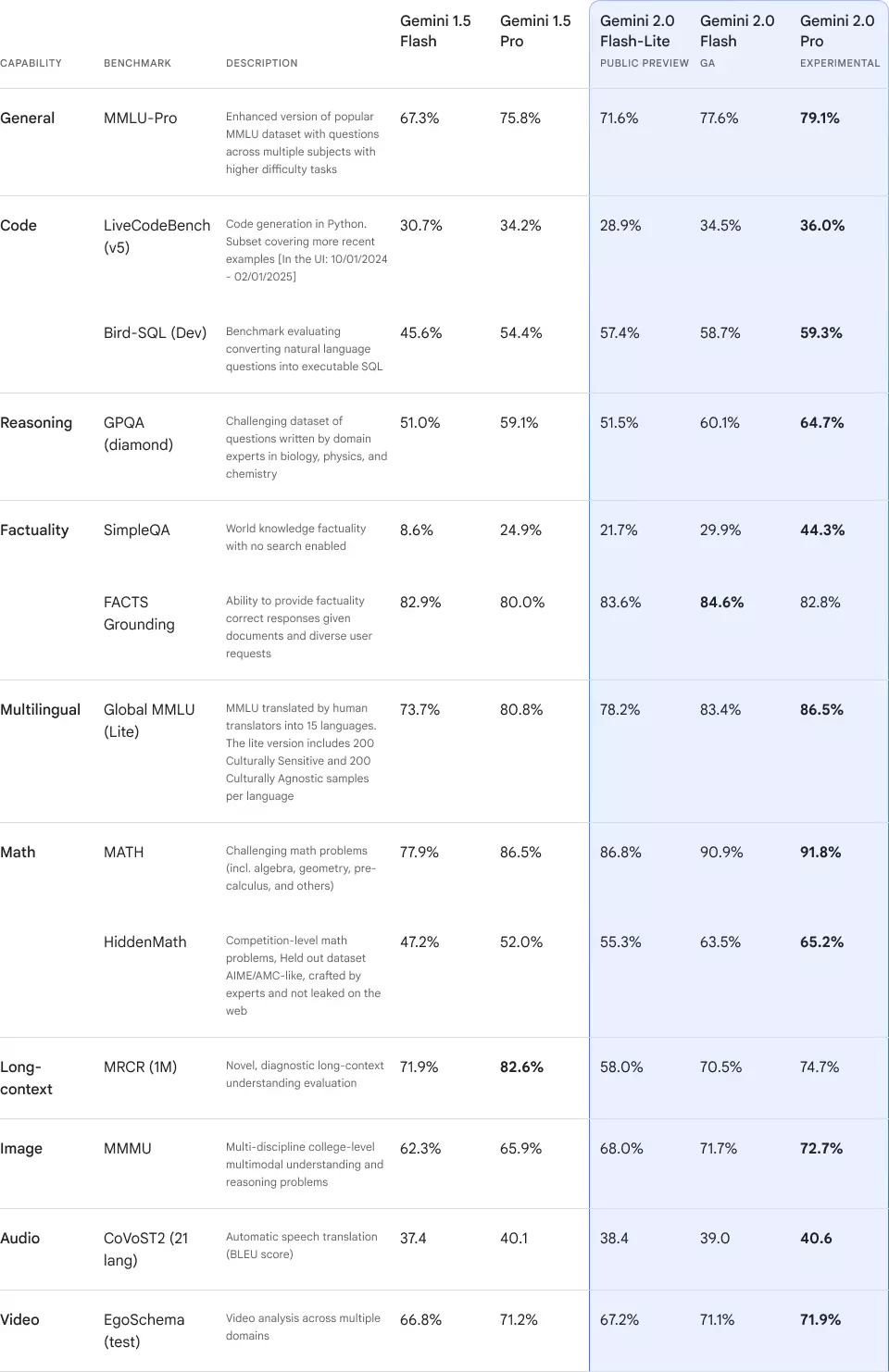

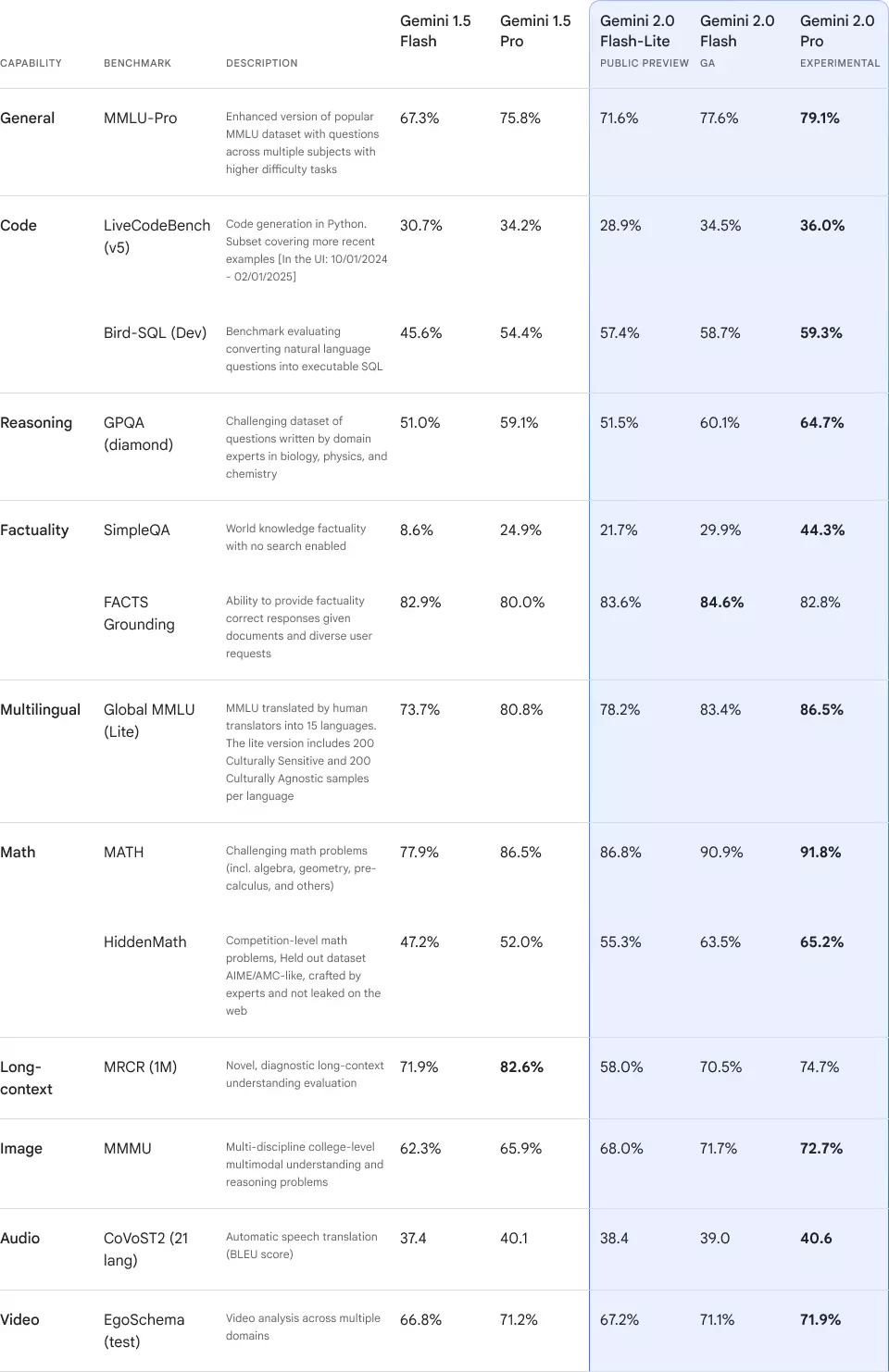

5. Performance Comparison

Here’s how the Gemini 2.0 models compare with the Gemini 1.5 models across various benchmarks:

| Capability | Benchmark | Description | Gemini 1.5 Flash | Gemini 1.5 Pro | Gemini 2.0 Flash-Lite (Preview) | Gemini 2.0 Flash GA | Gemini 2.0 Pro (Experimental) |
| --- | --- | --- | --- | --- | --- | --- | --- |
| General | MMLU-Pro | Enhanced MMLU dataset with higher difficulty across subjects | 67.3% | 75.8% | 71.6% | 77.6% | 79.1% |
| Code | LiveCodeBench (v5) | Python code generation with newer examples [UI: 10/01/2024 - 02/01/2025] | 30.7% | 34.2% | 28.9% | 34.5% | 36.0% |
| | Bird-SQL (Dev) | Evaluates converting natural language questions into executable SQL | 45.6% | 54.4% | 57.4% | 58.7% | 59.3% |
| Reasoning | GPQA (diamond) | Challenging expert-written questions in biology, physics, and chemistry | 51.0% | 59.1% | 51.5% | 60.1% | 64.7% |
| Factuality | SimpleQA | World knowledge factuality with no search | 8.6% | 24.9% | 21.7% | 29.9% | 44.3% |
| | FACTS Grounding | Provides factually correct responses based on documents | 82.9% | 80.0% | 83.6% | 84.6% | 82.8% |
| Multilingual | Global MMLU (Lite) | MMLU translated into 15 languages by human translators | 73.7% | 80.8% | 78.2% | 83.4% | 86.5% |
| Math | MATH | Challenging math problems (algebra, geometry, pre-calculus, etc.) | 77.9% | 86.5% | 86.8% | 90.9% | 91.8% |
| | HiddenMath | Competition-level math problems, crafted by experts | 47.2% | 52.0% | 55.3% | 63.5% | 65.2% |
| Long-Context | MRCR (1M) | Novel diagnostic long-context understanding evaluation | 71.9% | 82.6% | 58.0% | 70.5% | 74.7% |
| Image | MMMU | Multi-discipline college-level multimodal understanding | 62.3% | 65.9% | 68.0% | 71.7% | 72.7% |
| Audio | CoVoST2 (21 lang) | Automatic speech translation (BLEU score) | 37.4 | 40.1 | 38.4 | 39.0 | 40.6 |
| Video | EgoSchema (test) | Video analysis across multiple domains | 66.8% | 71.2% | 67.2% | 71.1% | 71.9% |
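As a worked check on the benchmark figures, the short sketch below compares Gemini 2.0 Flash-Lite (Preview) against Gemini 1.5 Flash using the scores listed above. The variable and function names are illustrative only; the numbers are copied from the table.

```python
# Scores copied from the benchmark table: (Gemini 1.5 Flash, Gemini 2.0 Flash-Lite Preview)
SCORES = {
    "MMLU-Pro": (67.3, 71.6),
    "LiveCodeBench (v5)": (30.7, 28.9),
    "Bird-SQL (Dev)": (45.6, 57.4),
    "GPQA (diamond)": (51.0, 51.5),
    "SimpleQA": (8.6, 21.7),
    "FACTS Grounding": (82.9, 83.6),
    "Global MMLU (Lite)": (73.7, 78.2),
    "MATH": (77.9, 86.8),
    "HiddenMath": (47.2, 55.3),
    "MRCR (1M)": (71.9, 58.0),
    "MMMU": (62.3, 68.0),
    "CoVoST2 (21 lang)": (37.4, 38.4),  # BLEU score, not a percentage
    "EgoSchema (test)": (66.8, 67.2),
}


def compare(scores):
    """Split benchmarks into those where the newer model scores higher and the rest."""
    wins = [name for name, (old, new) in scores.items() if new > old]
    losses = [name for name, (old, new) in scores.items() if new <= old]
    return wins, losses


def deltas(scores):
    """Point difference (new minus old) per benchmark, rounded to one decimal."""
    return {name: round(new - old, 1) for name, (old, new) in scores.items()}


if __name__ == "__main__":
    wins, losses = compare(SCORES)
    print(f"Flash-Lite is ahead on {len(wins)} of {len(SCORES)} benchmarks")
    print("Behind on:", ", ".join(losses))
```

On these figures, Flash-Lite leads 1.5 Flash on 11 of the 13 benchmarks and trails on LiveCodeBench (v5) and MRCR (1M), so workloads centered on recent-style code generation or very long contexts deserve their own evaluation.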

6. FAQ: Frequently Asked Questions

Q1: When will Gemini 2.0 be available?

Gemini 2.0 Flash is generally available now through Google AI Studio and Vertex AI. Gemini 2.0 Flash-Lite is in preview, and Gemini 2.0 Pro is an experimental release.

Q2: Which version is best for developers?

For simple, cost-sensitive tasks, use 2.0 Flash-Lite. For high-throughput, low-latency workloads, use 2.0 Flash. For advanced reasoning and programming, choose 2.0 Pro.

Q3: Is Gemini 2.0 suitable for enterprise applications?

Yes! Gemini 2.0 provides enterprise-grade AI solutions for customer service, data analysis, content generation, and more.
